ML Research Engineer with 8+ years of research and industry experience developing AI solutions. My expertise spans large language models (LLMs), agentic systems, retrieval-augmented generation (RAG), prompt optimization, and multi-modal learning. I have a proven track record of leading AI projects from R&D to deployment at Google Research, RBC Borealis, and the Vector Institute, and of building intelligent agents and enterprise AI applications that automate complex workflows, improve efficiency, and drive measurable business impact in regulated environments. I have published in top-tier venues, including ICLR, AAAI, TMLR, and ICASSP.

Email | CV | Google Scholar | LinkedIn | GitHub

Experience

- Senior Machine Learning Engineer

Roper Technologies, Inc., Toronto, Canada (Remote), Apr 2026 - Present

- Working on AI agents for enterprise workflow automation.

- Machine Learning Research Engineer II

RBC Borealis, Toronto, Canada, Aug 2025 - Apr 2026

- Designed agentic solutions that automate complex workflows while complying with regulations and pre-defined procedures.

- Built comprehensive evaluation stacks for AI agents using virtual clients, agent-to-agent protocols, and internal-state evaluation to ensure the reliability and performance of tool-using multi-agent systems.

- Developed a call-transcript analysis application to identify advisor workflow pain points and time-consuming tasks, and built agentic systems for open-ended question answering, fact-finding, analysis, and visualization, enabling workflow automation, higher efficiency, improved client satisfaction, and increased profitability.

- Built enterprise AI applications using LLMs and agent-based systems, including an LLM-based AI trainer that coaches new and existing advisors to generate more revenue by resolving client issues and recommending relevant products and services.

- Machine Learning Research Intern

RBC Borealis, Toronto, Canada, Jan 2025 - Aug 2025

- Developed an efficient LLM inference pipeline, reducing latency by 90% while improving performance by 3.5 points.

- Optimized foundation models for real-time AI applications, enhancing scalability and robustness in production.

- Proposed a new method for improving the reasoning of smaller language models, published at a NeurIPS’25 workshop.

- Applied Machine Learning Intern

Vector Institute for AI, Jan 2024 - Dec 2024

- Led development of a multi-modal foundation model for healthcare, reducing annotation costs by training with limited paired data (GitHub).

- Built a framework for training multi-modal models and benchmarked methods to identify the best-performing approaches (GitHub).

- Proposed a novel few-shot tuning approach for vision-language models, published in TMLR’25.

- Achieved state-of-the-art performance with medical foundation models, resulting in five publications.

- Student Researcher

Google Research, May 2023 - Oct 2023

- Designed a strategic sampling method for self-supervised learning, cutting training costs by 80% and boosting accuracy by 2% on IMU-based activity recognition.

- Developed a few-shot class-incremental learning framework that enhances adaptability, published in TMLR’24.

- Applied ML Researcher

Robi Axiata Limited, Bangladesh, Nov 2019 - Jul 2021

- Designed and deployed large-scale ML systems for personalized recommendations, increasing user engagement by 15%.

- Built high-performance ML models for churn prediction and usage drop detection, achieving 85% accuracy.

- Developed end-to-end ML pipelines, covering data collection, labeling, validation, model development, deployment, and monitoring.

- Jr. Software Engineer

REVE Systems Ltd., Mar 2019 - Oct 2019

- Developed and deployed LLM-powered chatbots with retrieval-augmented generation (RAG), improving context awareness and response relevance.

- Contributed to one of the first LLMs for Bengali, including spell and grammar correction features.

Education

- Doctor of Philosophy

- Fast-tracked from Master's to Ph.D. for outstanding research contributions.

- Developed methods to train and deploy large foundation models using limited labelled data, enabling robust generalization under data scarcity and distribution shifts.

- Published 15+ papers in top-tier venues (e.g., ICLR, AAAI, TMLR).

- Mentored junior researchers and collaborated with industry partners (e.g., Workday, BMO).

- Master of Applied Science (MASc)

- Advanced to the Ph.D. program based on exceptional research performance before finalizing an MS thesis.

- Began research focus on computer vision applied to affective computing under Prof. Dr. Ali Etemad.

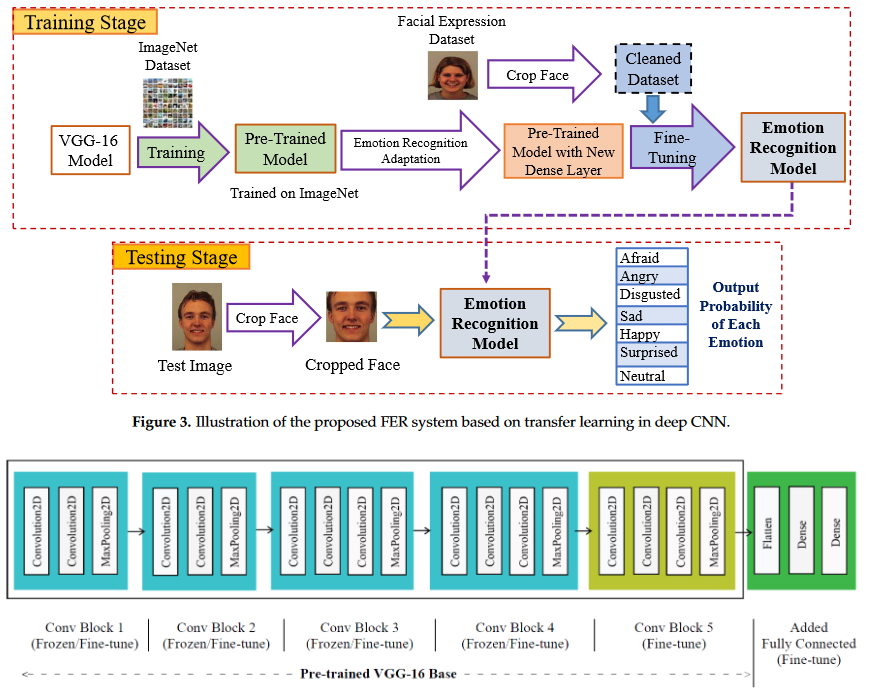

- BSc in Computer Science and Engineering

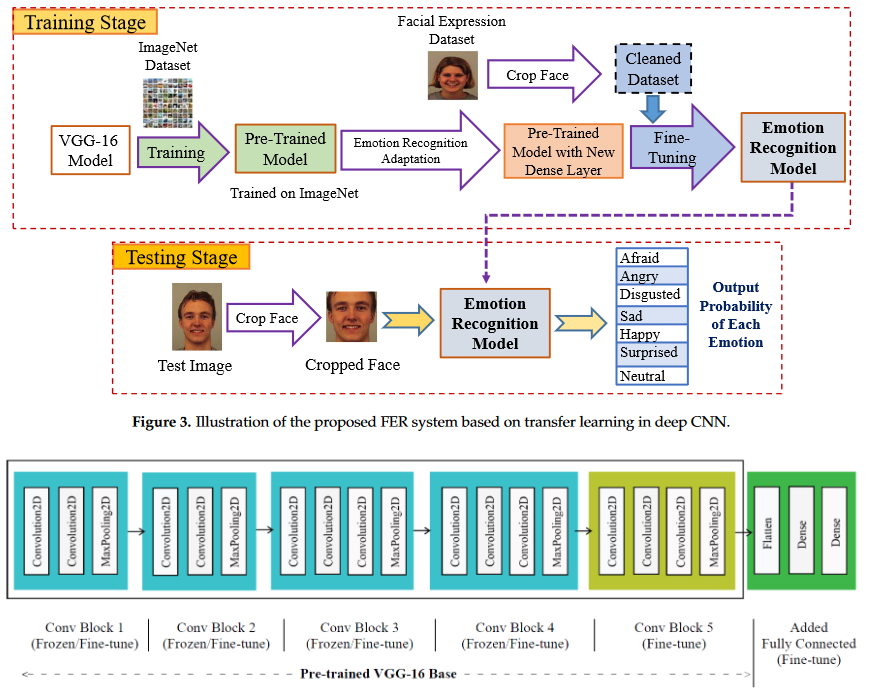

- Completed a 161.25-credit program with 40 courses, projects, and a thesis on facial emotion recognition using deep CNNs.

- Gained expertise in computer science principles, programming, software development, and research methodologies.

Dept. of Electrical and Computer Engineering, Queen's University, Canada, Sept 2020 - July 2025

Dept. of Electrical and Computer Engineering, Queen's University, Canada, Sept 2020 - Sept 2021

Khulna University of Engineering & Technology, Bangladesh, Jan 2015-Jan 2019

Research


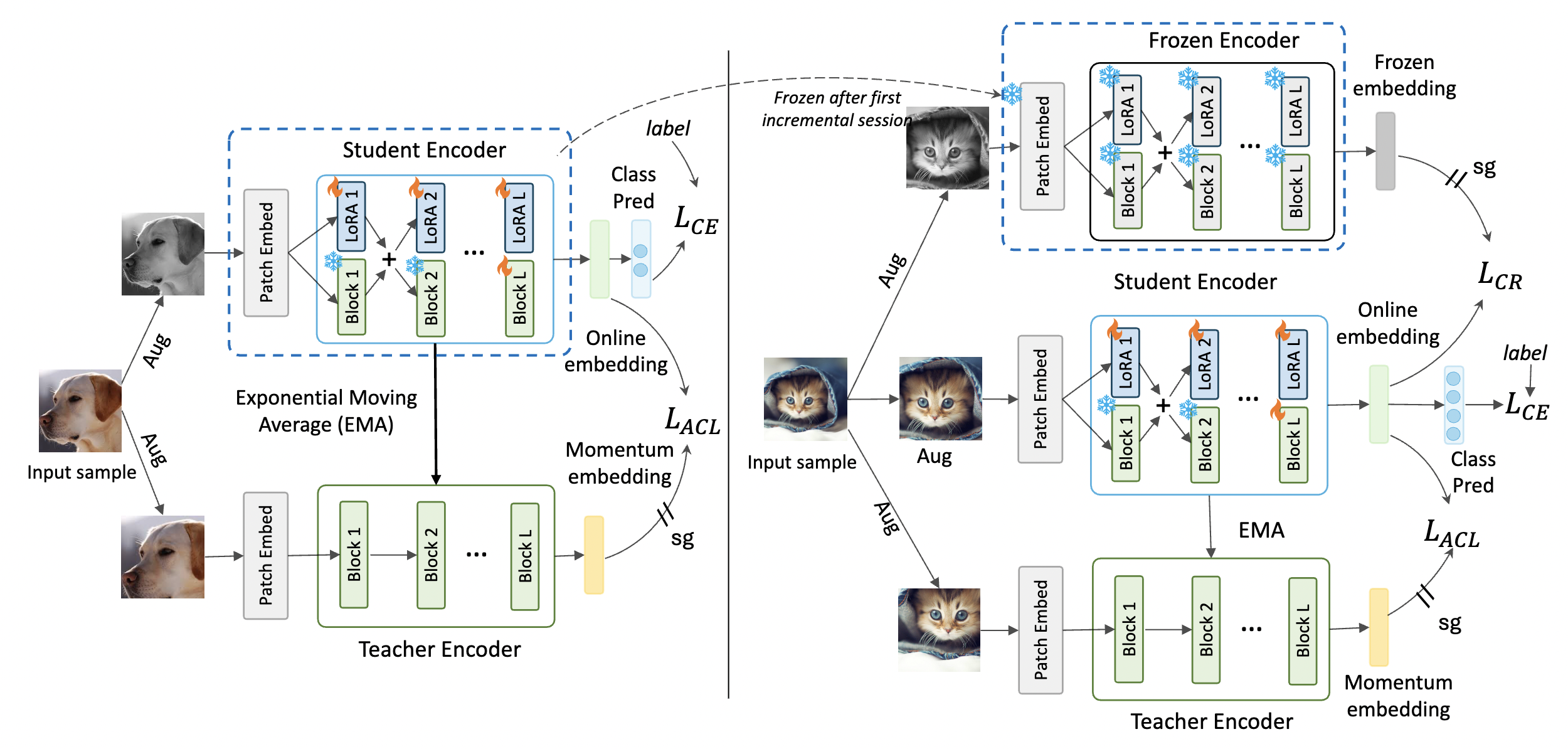

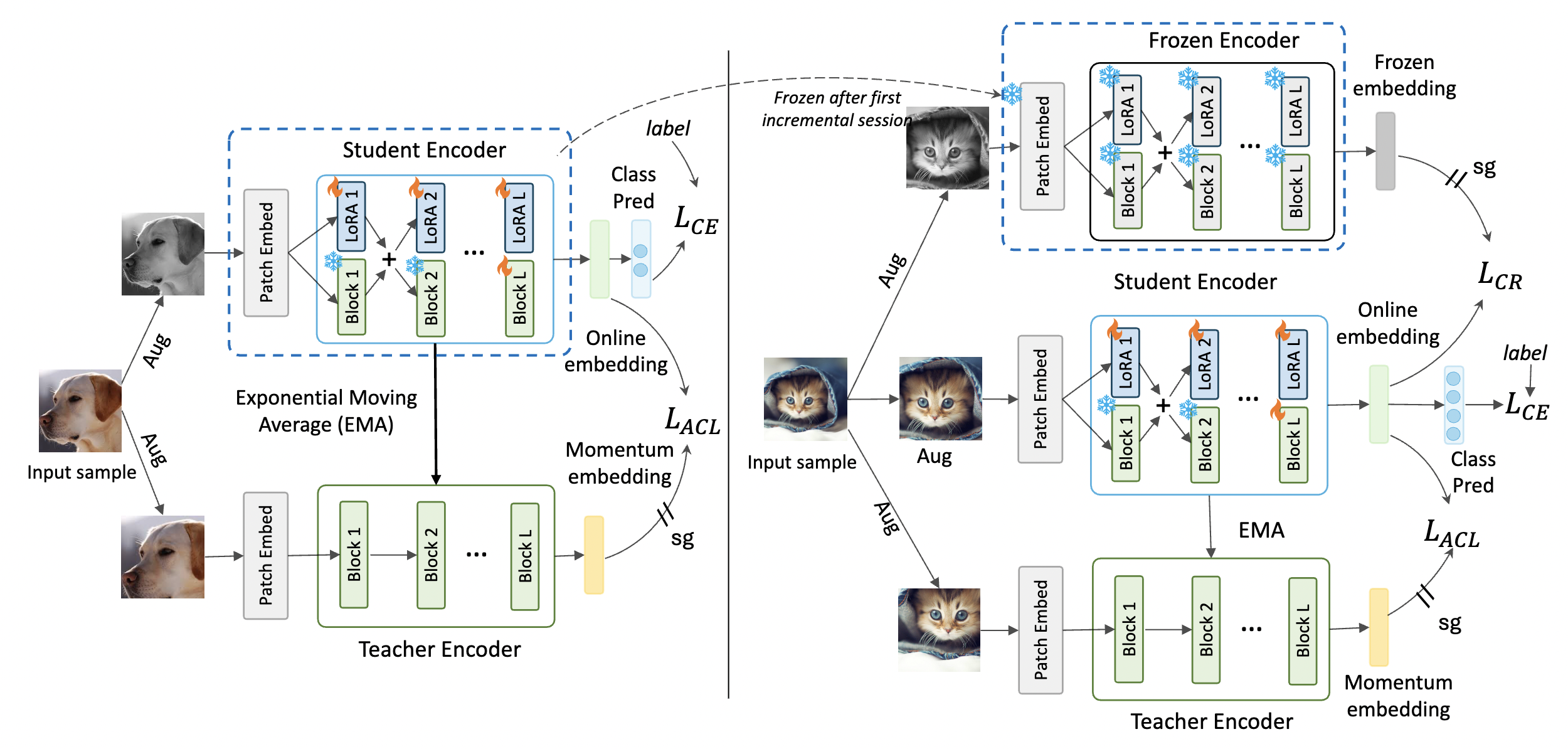

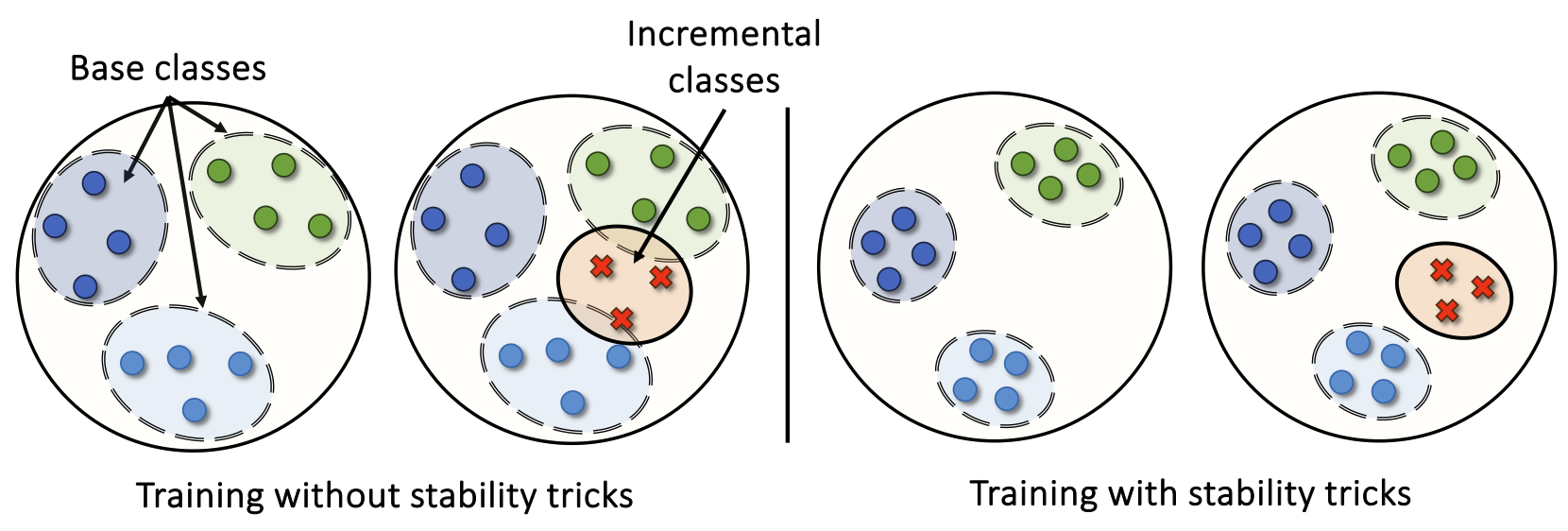

Consistency-Guided Asynchronous Contrastive Tuning for Few-Shot Class-Incremental Tuning of Foundation Models

Shuvendu Roy, Elham Dolatabadi, Arash Afkanpour, Ali Etemad

Transactions on Machine Learning Research (TMLR 2025)

Paper | ArXiv | Code

TLDR: We propose CoACT, a method for few-shot continual tuning of foundation models that uses asynchronous contrastive tuning and consistency-guided regularization. It outperforms baselines by up to 12.51% in few-shot class-incremental learning (FSCIL) and tuning (FSCIT) across 16 datasets, with reduced forgetting and strong low-shot results.

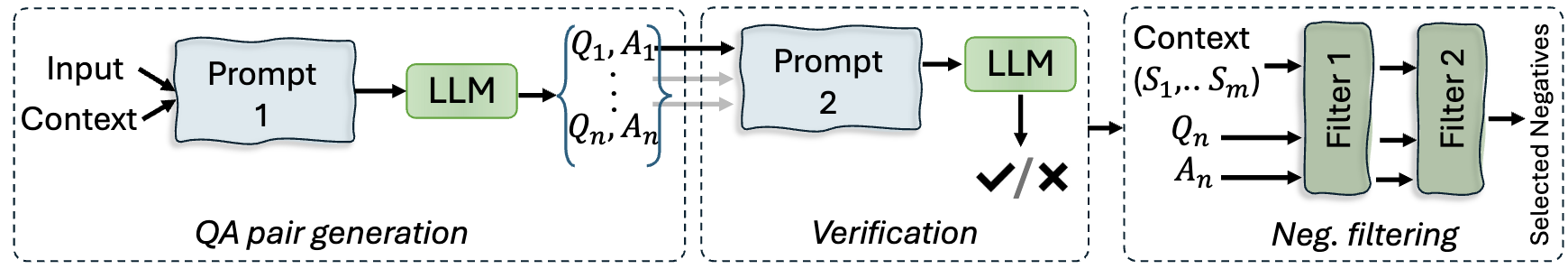

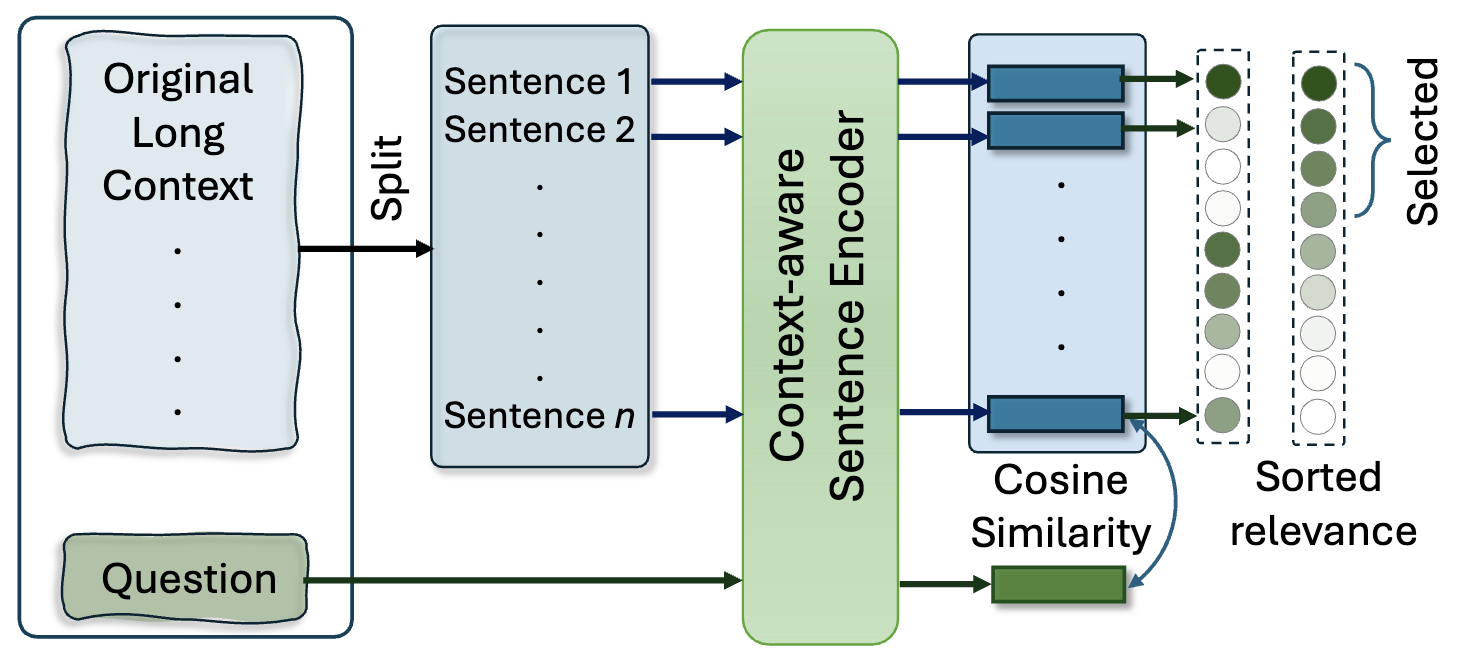

Prompt Compression with Context-Aware Sentence Encoding for Fast and Improved LLM Inference

Barys Liskavets, Maxim Ushakov, Shuvendu Roy, Mark Klibanov, Ali Etemad, Shane Luke

AAAI Conference on Artificial Intelligence (AAAI 2025)

Paper | ArXiv | Code

TLDR: We propose Context-Aware Prompt Compression (CPC), a sentence-level technique that compresses prompts to reduce computational costs while preserving the information relevant to large language models (LLMs). CPC uses a novel context-aware sentence encoder, trained in a contrastive setup, to rank each sentence's relevance to a given question. It outperforms token-level compression methods on benchmarks, achieving up to 10.93x faster inference and better performance under shorter context constraints.

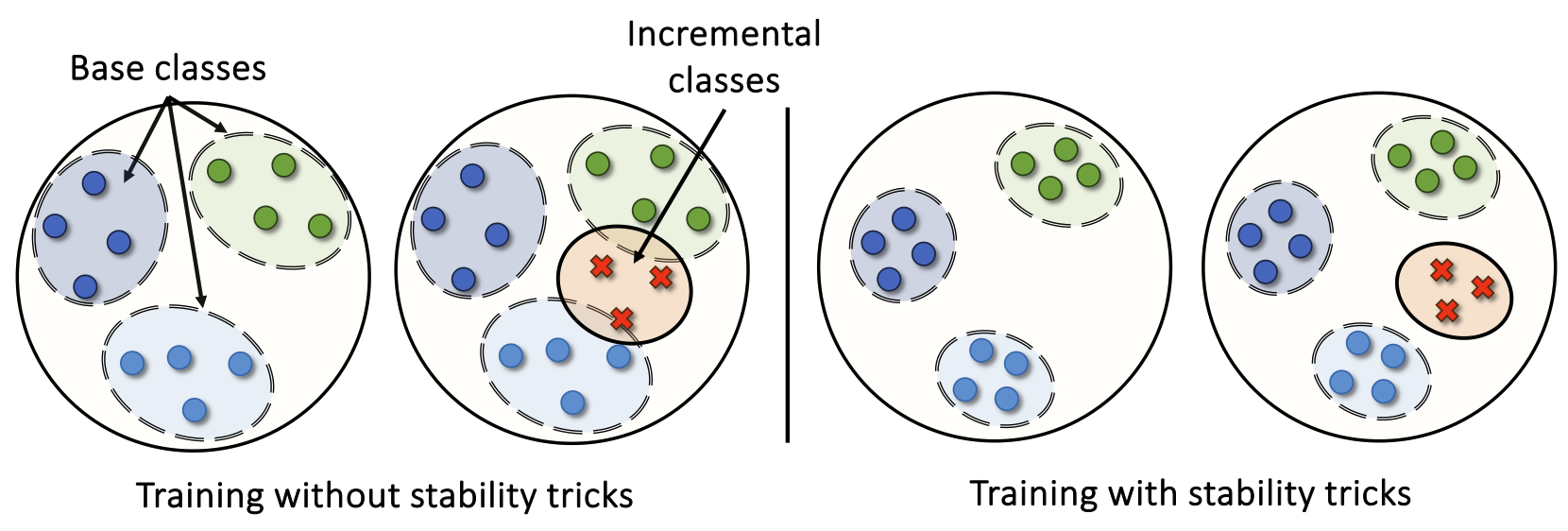

A Bag of Tricks for Few-Shot Class-Incremental Learning

Shuvendu Roy, Chunjong Park, Aldi Fahrezi, Ali Etemad

Transactions on Machine Learning Research (TMLR 2024)

Paper | ArXiv

TLDR: We propose a unified bag-of-tricks framework for few-shot class-incremental learning (FSCIL), enhancing stability and adaptability to new tasks with limited samples. Organized into stability, adaptability, and training tricks, our approach mitigates forgetting, improves new class learning, and boosts overall performance.

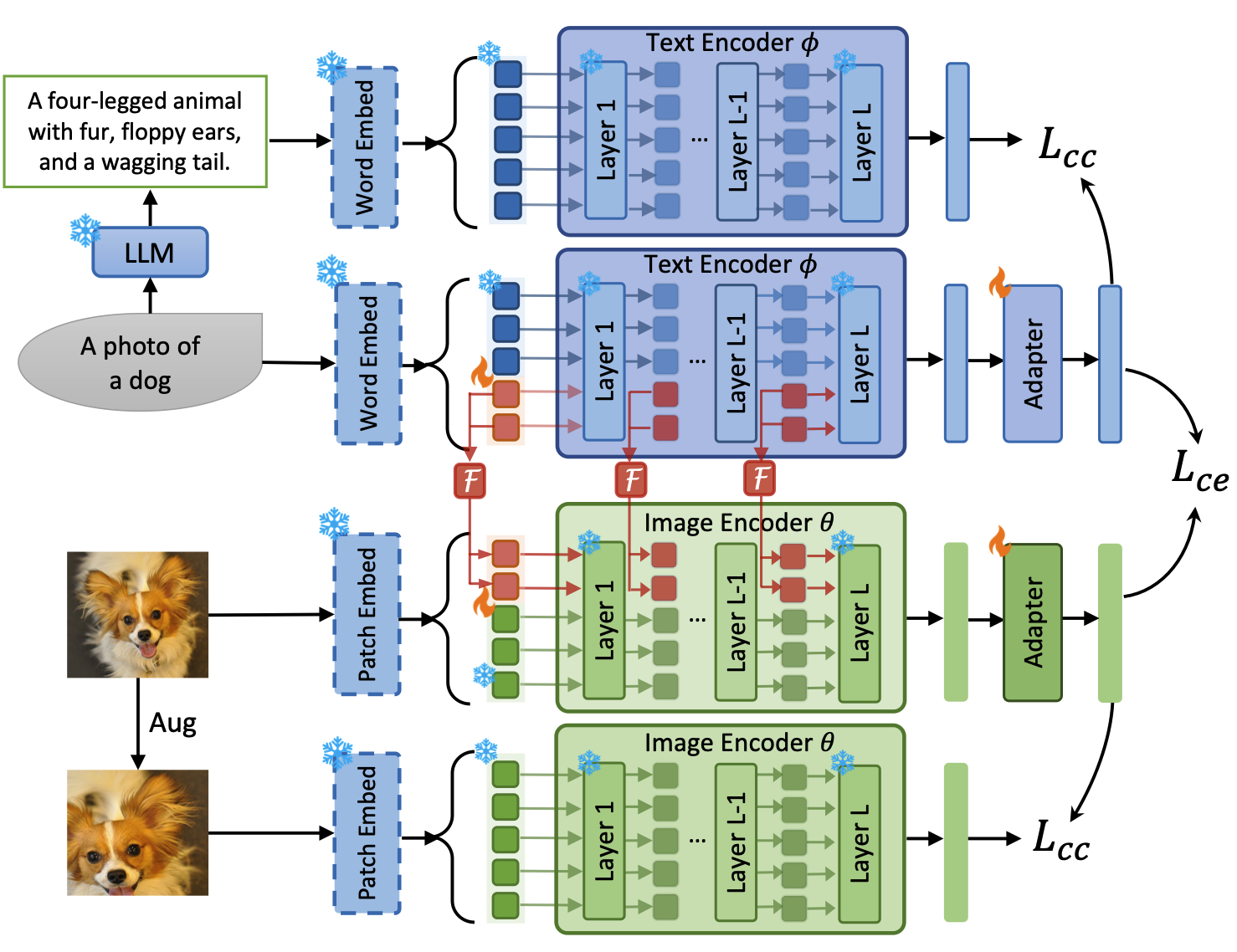

Consistency-guided Prompt Learning for Vision-Language Models

Shuvendu Roy, Ali Etemad

International Conference on Learning Representations (ICLR 2024)

Workshop version: "Learning Through Consistency for Prompt Tuning," NeurIPS 2023 Workshop on Robustness of Few-shot and Zero-shot Learning in Foundation Models (R0-FoMo)

Paper | ArXiv | Workshop Paper | Code

TLDR: We propose Consistency-guided Prompt learning (CoPrompt), a fine-tuning method for vision-language models that improves generalization in few-shot settings. CoPrompt prevents overfitting by enforcing a consistency constraint between the trainable and pre-trained models, using perturbed inputs for regularization, and combining prompts with adapters for greater tuning flexibility.

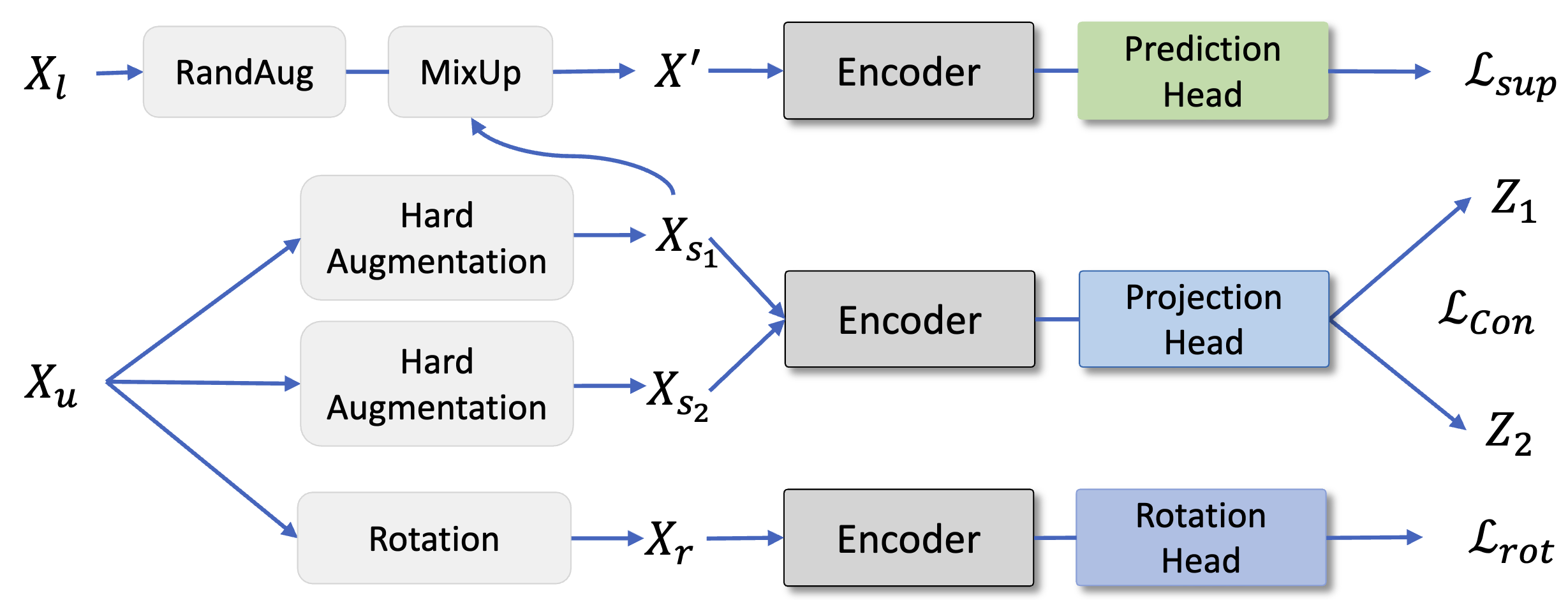

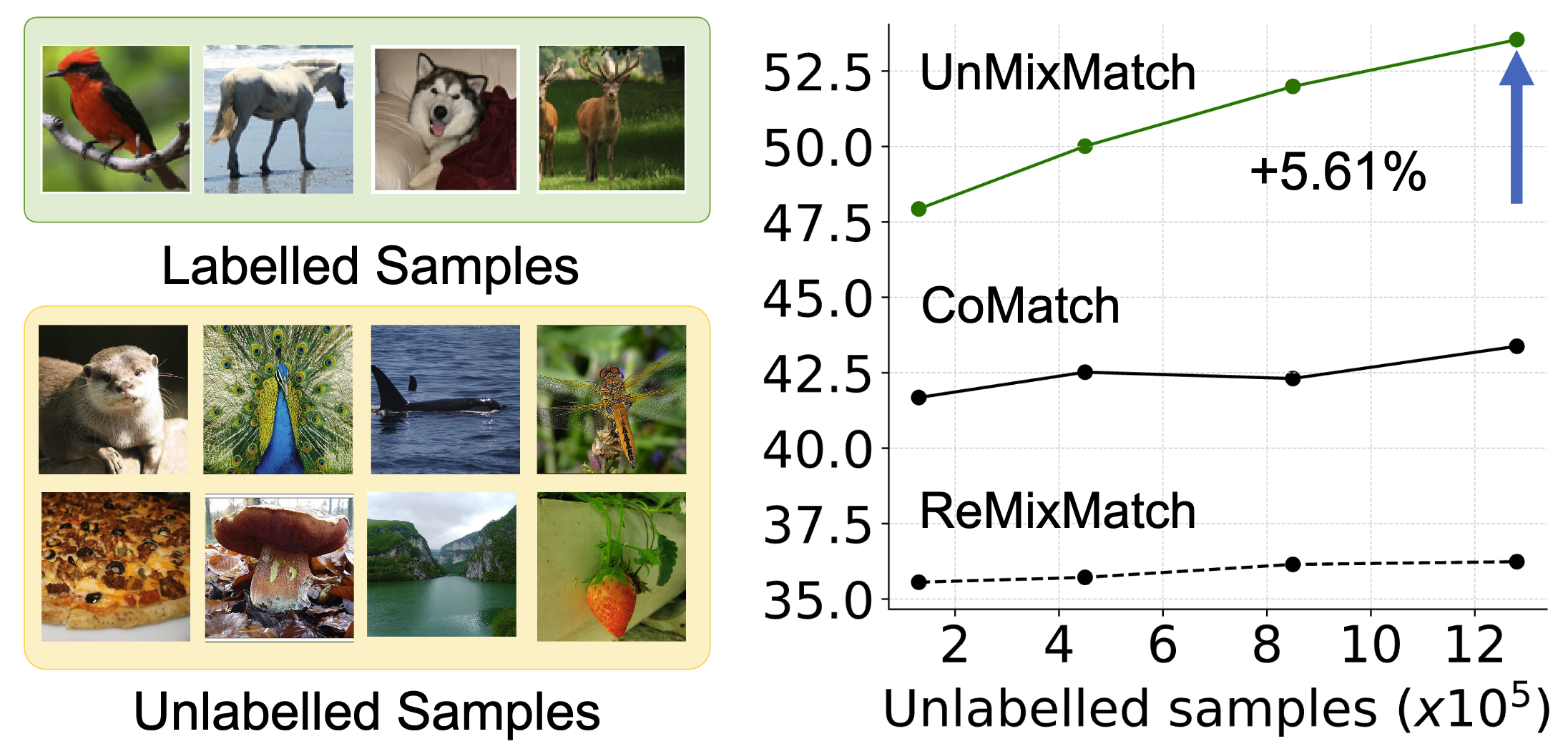

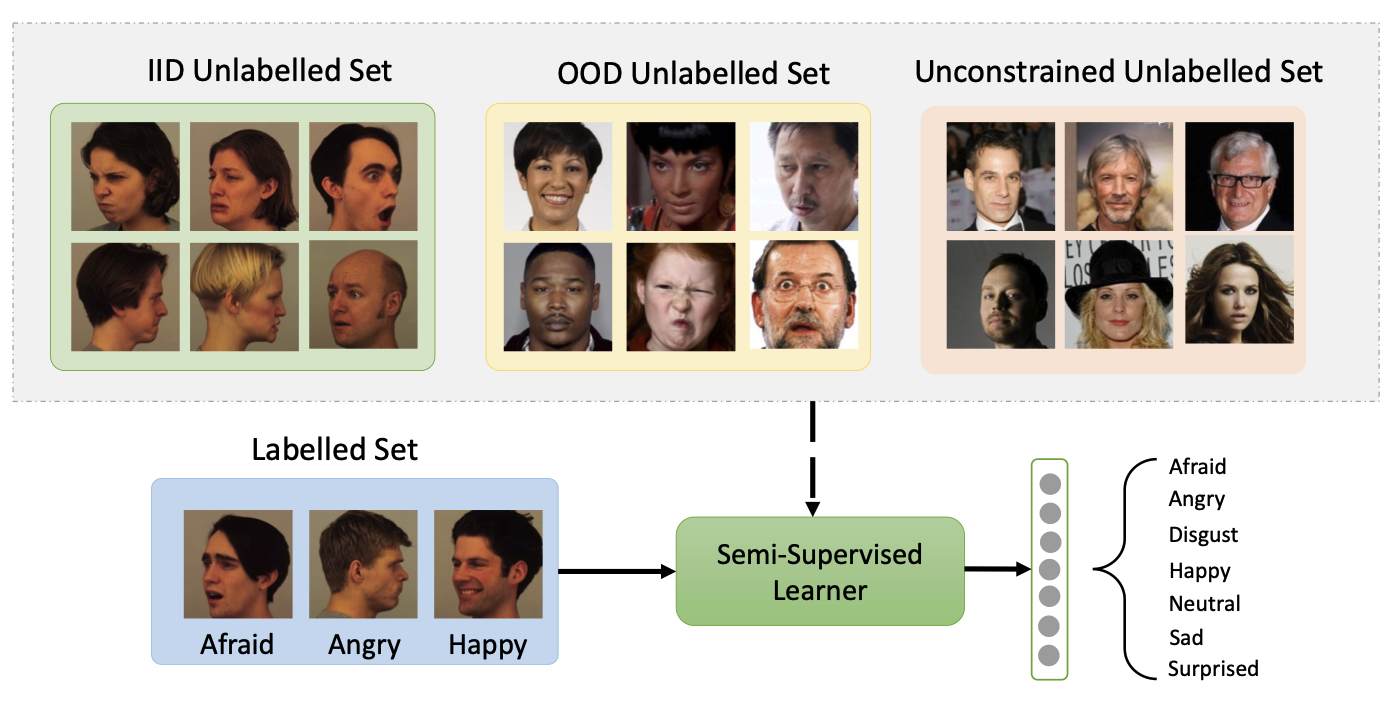

Scaling Up Semi-supervised Learning with Unconstrained Unlabelled Data

Shuvendu Roy, Ali Etemad

AAAI Conference on Artificial Intelligence (AAAI 2024)

Workshop version: "Does Unconstrained Unlabeled Data Help Semi-Supervised Learning?" NeurIPS 2023 Workshop: Self-Supervised Learning - Theory and Practice

Paper | ArXiv | Workshop Paper | Code

TLDR: We introduce UnMixMatch, a semi-supervised learning framework designed to leverage unconstrained unlabeled data to improve scalability and generalizability. Unlike existing methods, which assume that labeled and unlabeled data come from the same distribution, UnMixMatch removes this assumption with three key components: a supervised learner with strong regularization, a contrastive consistency regularizer, and a self-supervised loss.

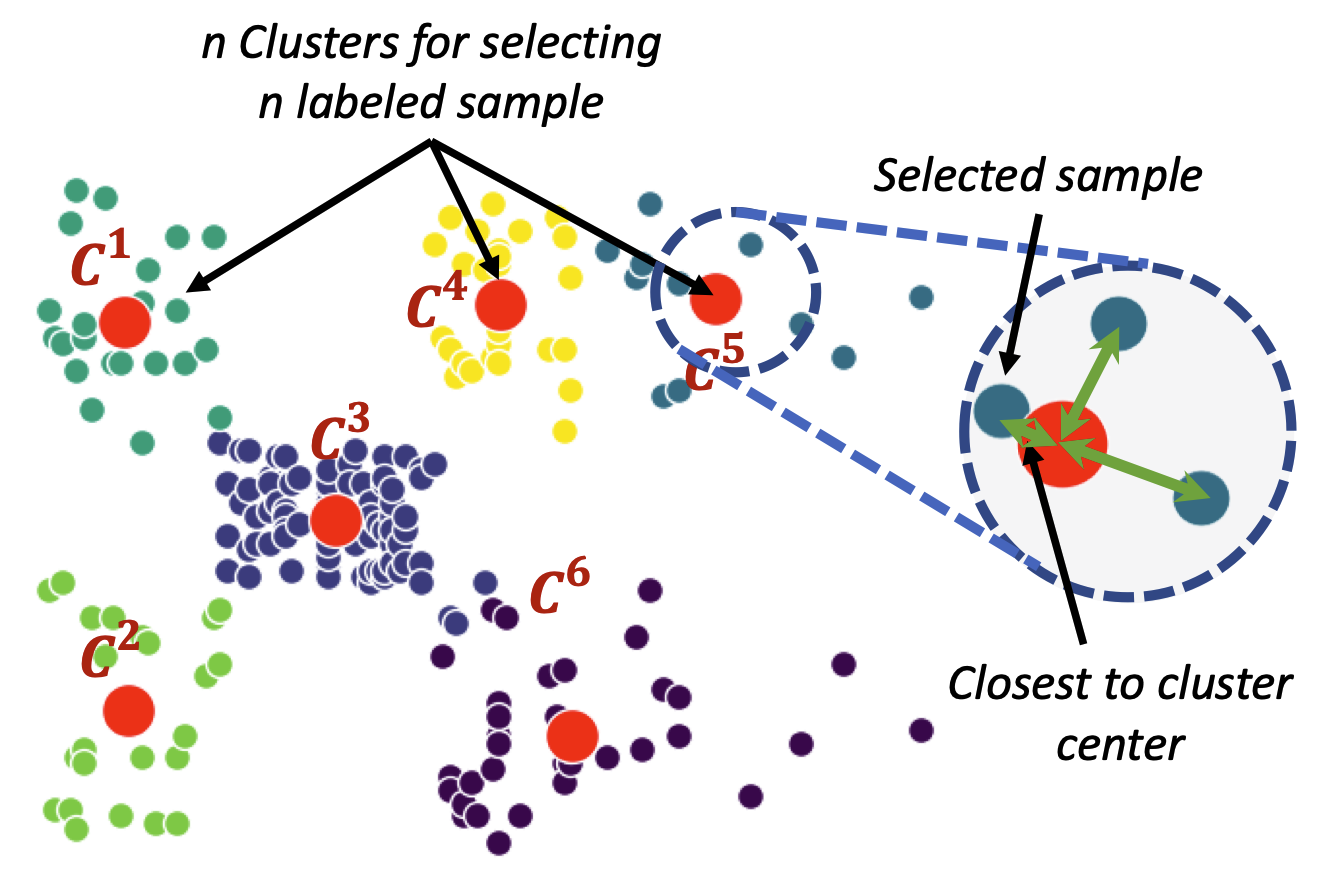

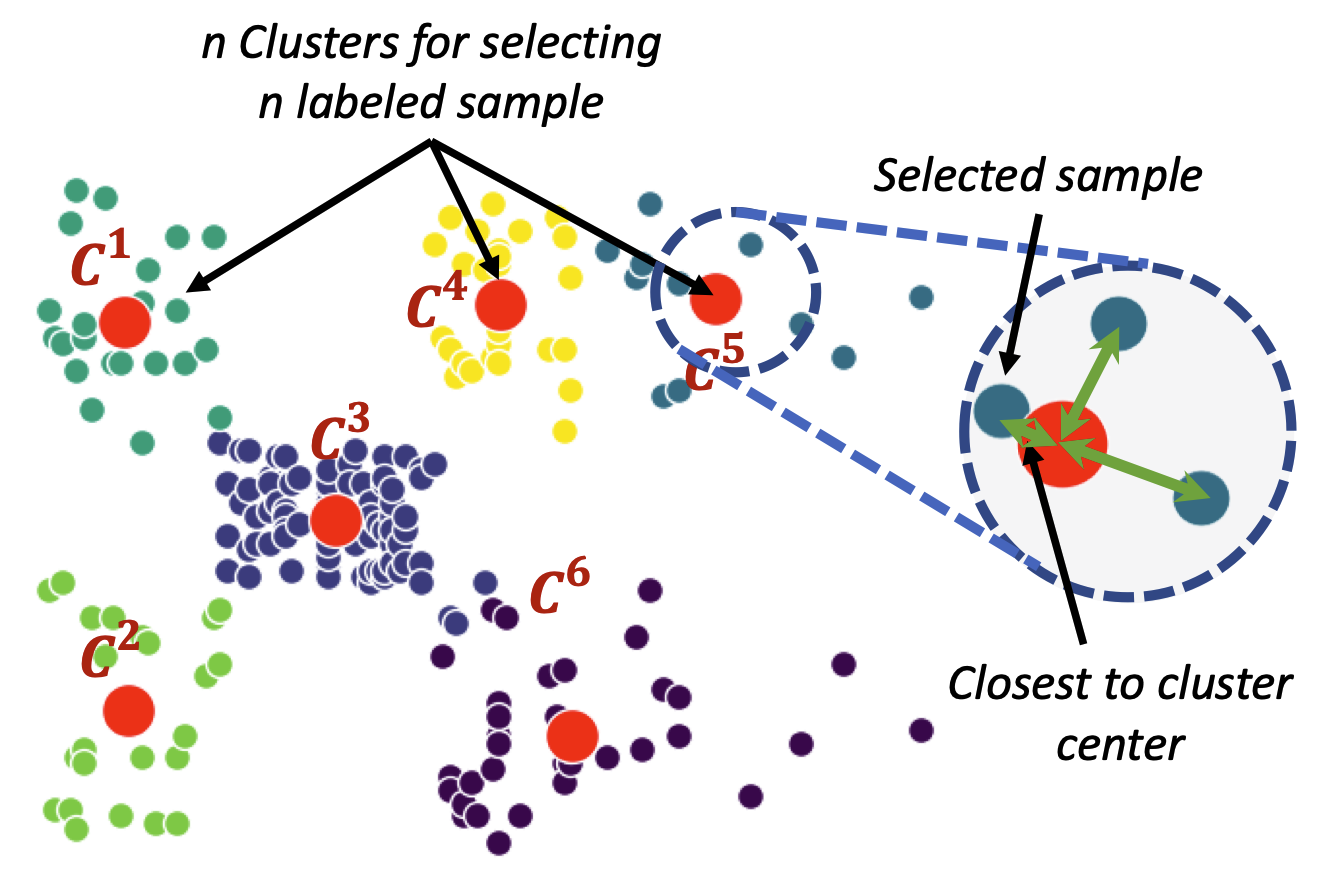

Impact of Strategic Sampling and Supervision Policies on Semi-supervised Learning

Shuvendu Roy, Ali Etemad

IEEE Transactions on Emerging Topics in Computational Intelligence (IEEE TETCI 2024)

Paper | ArXiv

TLDR: This study investigates how labeled samples are selected and used in semi-supervised learning when labeled data is scarce. Selecting representative samples for labeling improves performance by up to 7.5% in low-label scenarios, while label injection strategies during training have minimal effect.

Exploring the Boundaries of Semi-Supervised Facial Expression Recognition using In-Distribution, Out-of-Distribution, and Unconstrained Data

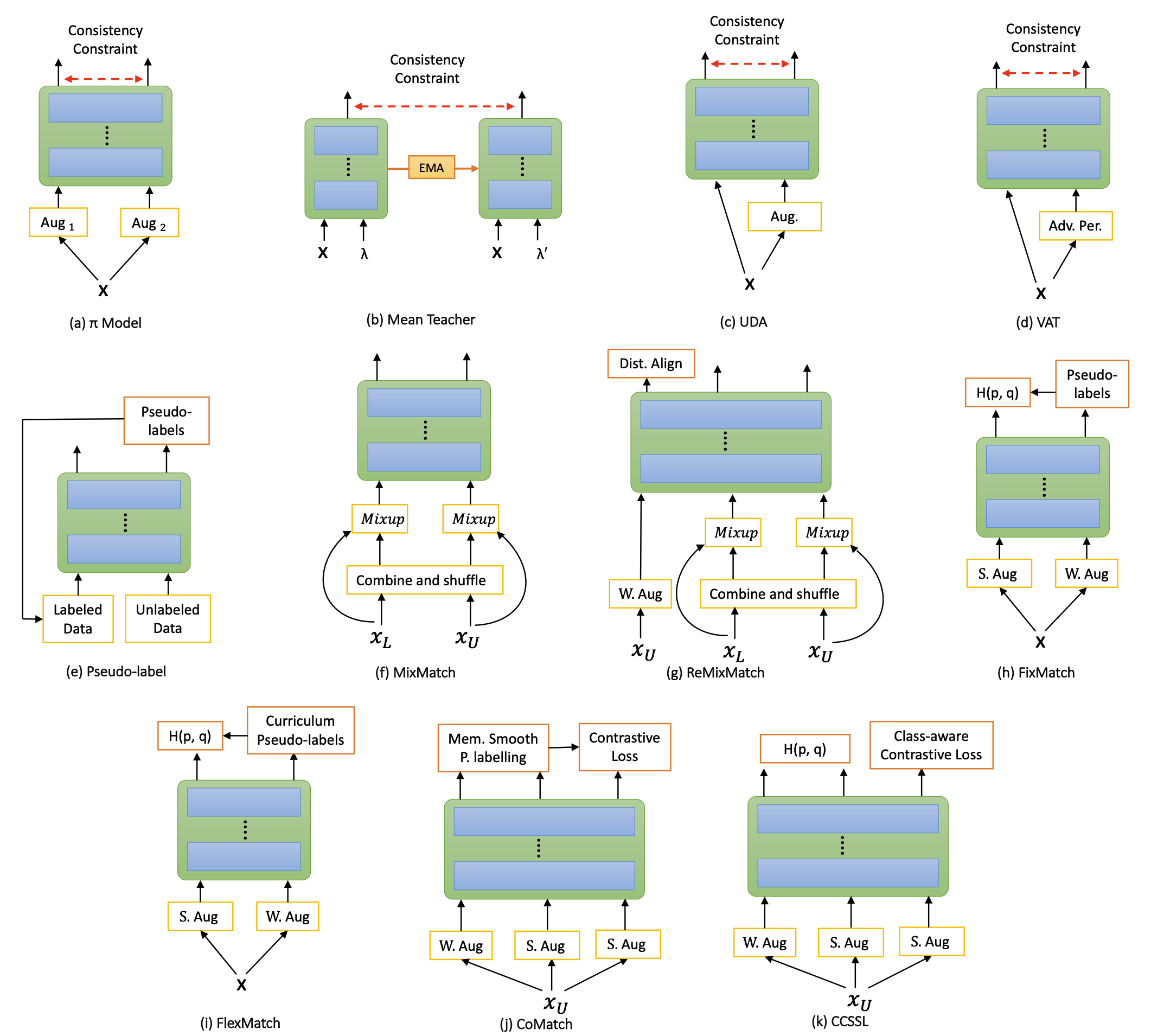

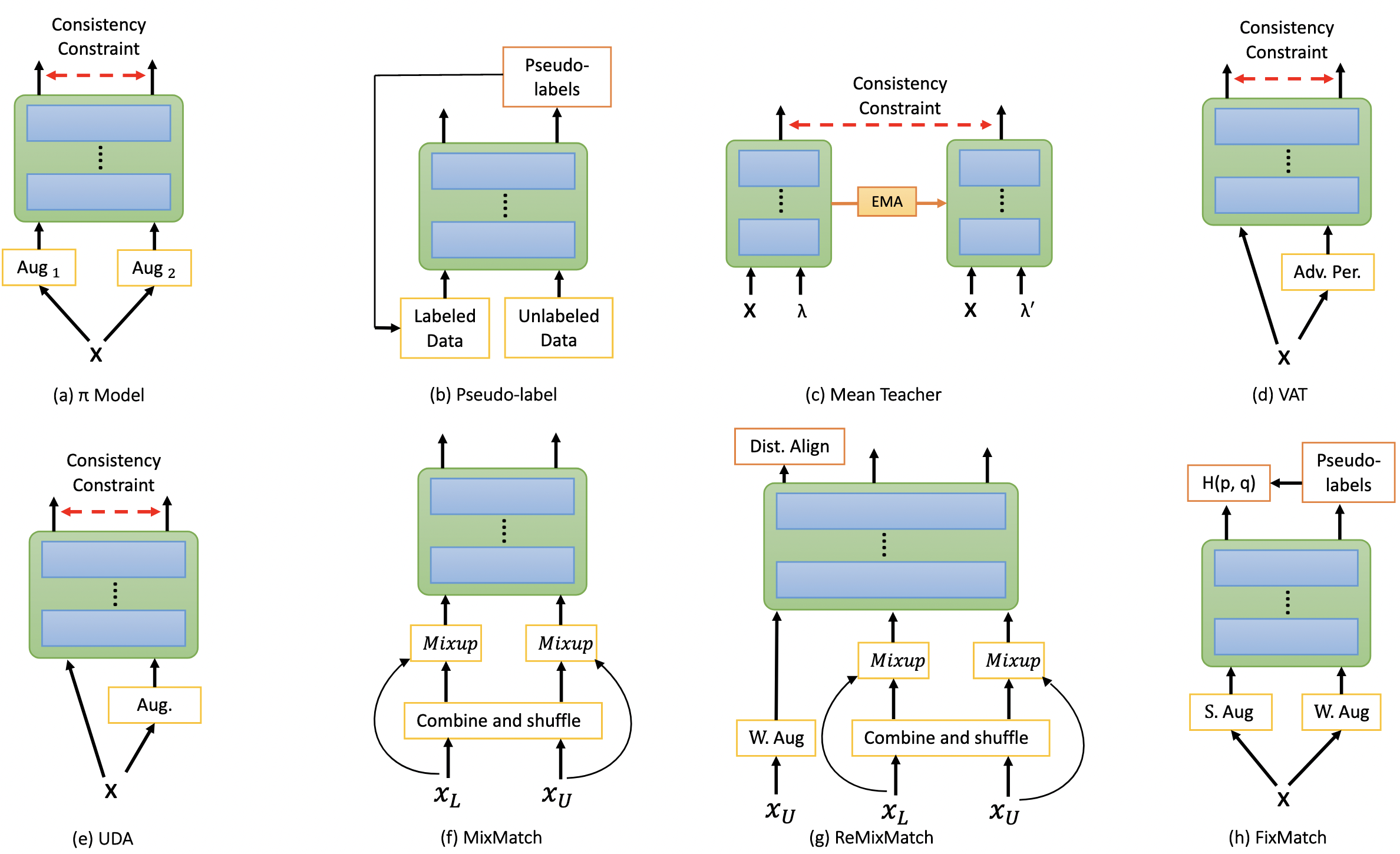

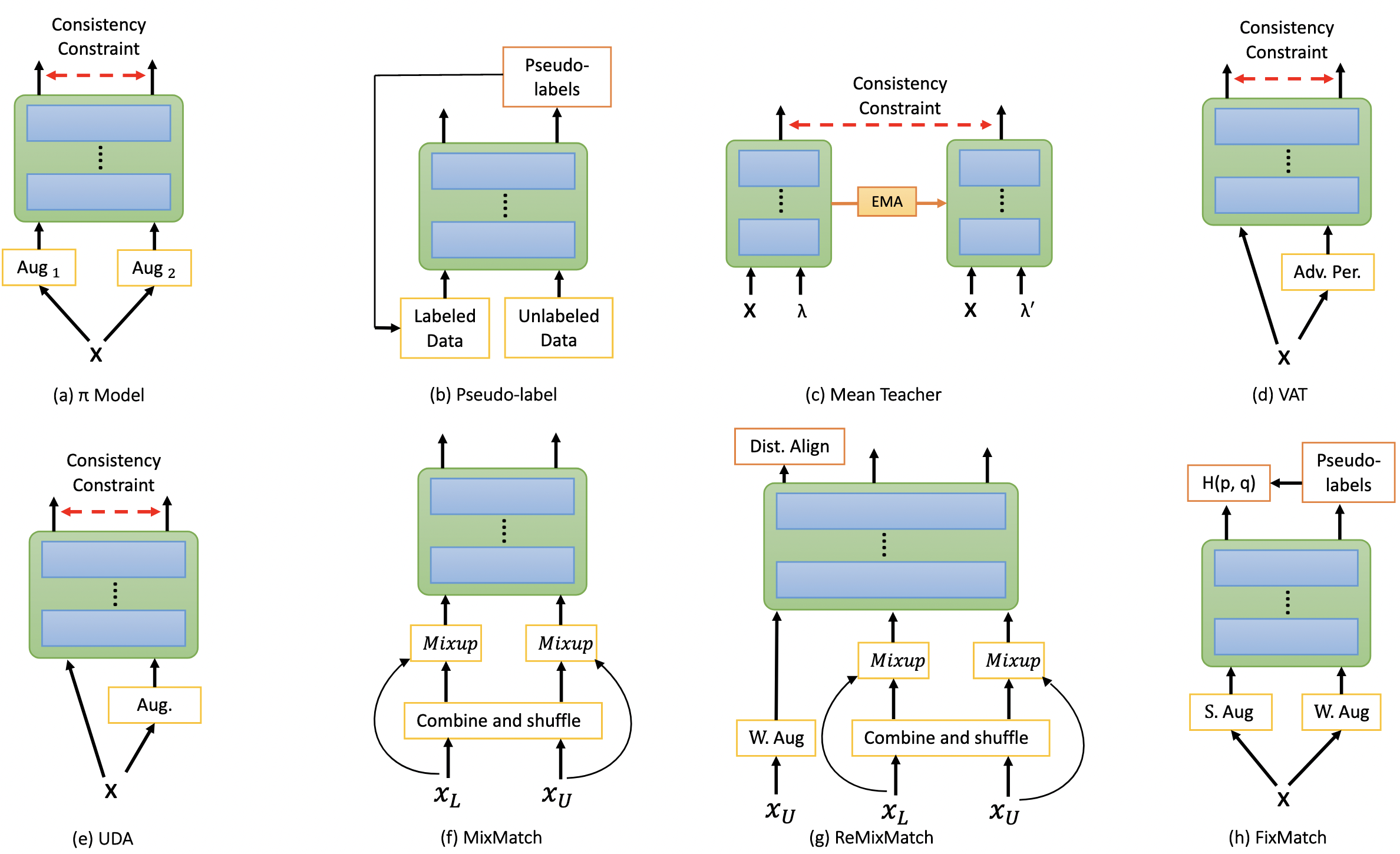

Shuvendu Roy, Ali Etemad

IEEE Transactions on Affective Computing (IEEE TAFFC 2024)

Paper | ArXiv | Code

TLDR: This study evaluates 11 recent semi-supervised learning methods for facial expression recognition (FER) across diverse settings, including in-distribution, out-of-distribution, and unconstrained data. FixMatch excels with in-distribution data, while ReMixMatch performs best in the more challenging scenarios; across settings, semi-supervised learning consistently outperforms supervised training.

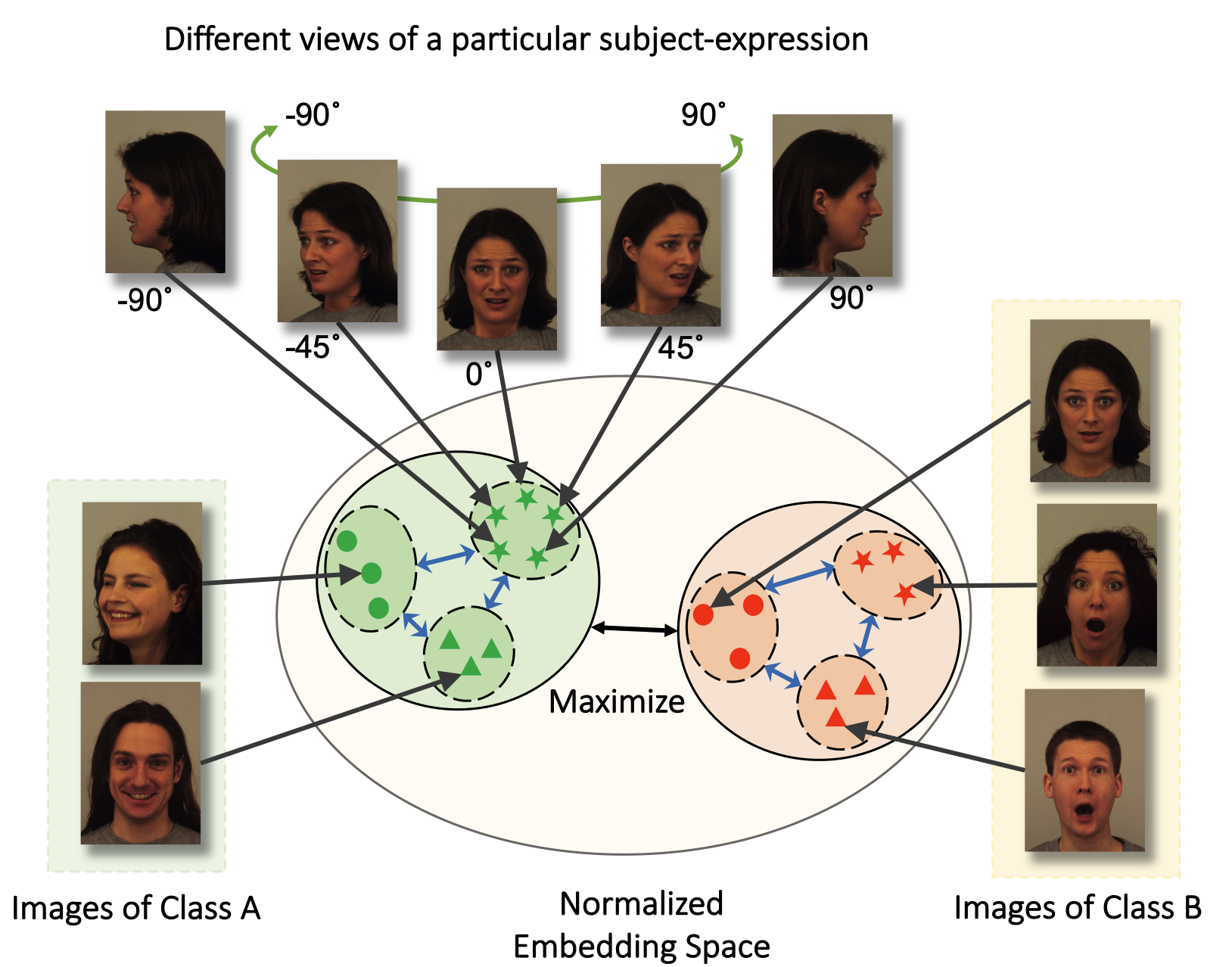

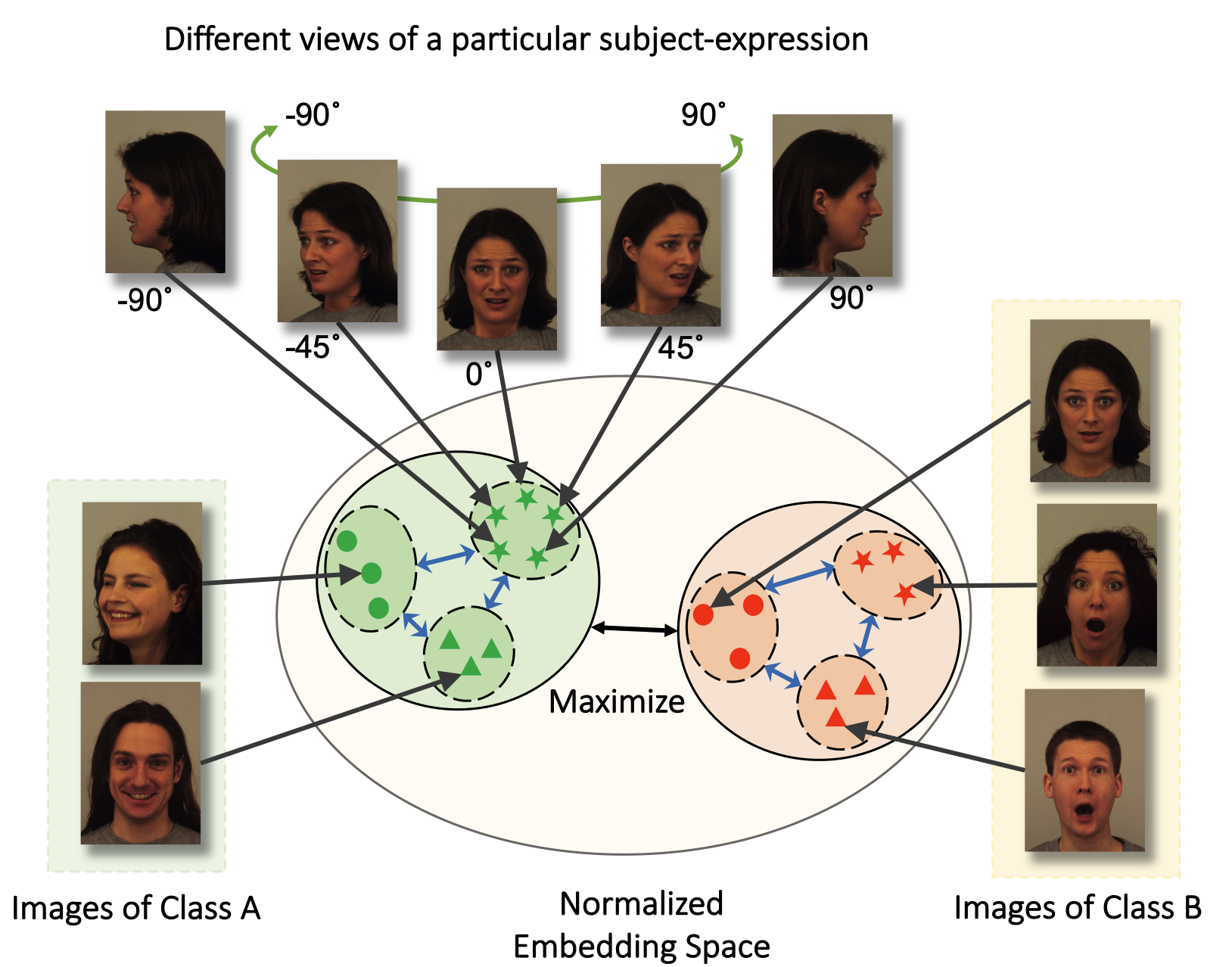

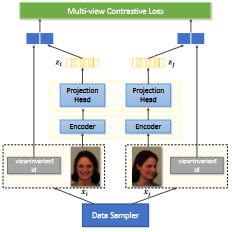

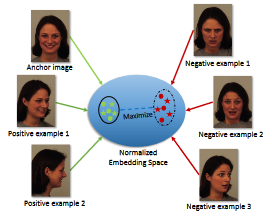

Contrastive Learning of View-invariant Representations for Facial Expressions Recognition

Shuvendu Roy, Ali Etemad

ACM Transactions on Multimedia Computing, Communications and Applications (ACM TOMM 2023)

Paper | ArXiv

TLDR: We introduce ViewFX, a facial expression recognition framework that accurately classifies expressions captured from various viewing angles. ViewFX uses contrastive learning to learn view-invariant representations of expressions, enabling expression recognition from any view angle.

Active Learning with Contrastive Pre-training for Facial Expression Recognition

Shuvendu Roy, Ali Etemad

11th International Conference on Affective Computing and Intelligent Interaction (ACII 2023)

Paper | ArXiv | Code

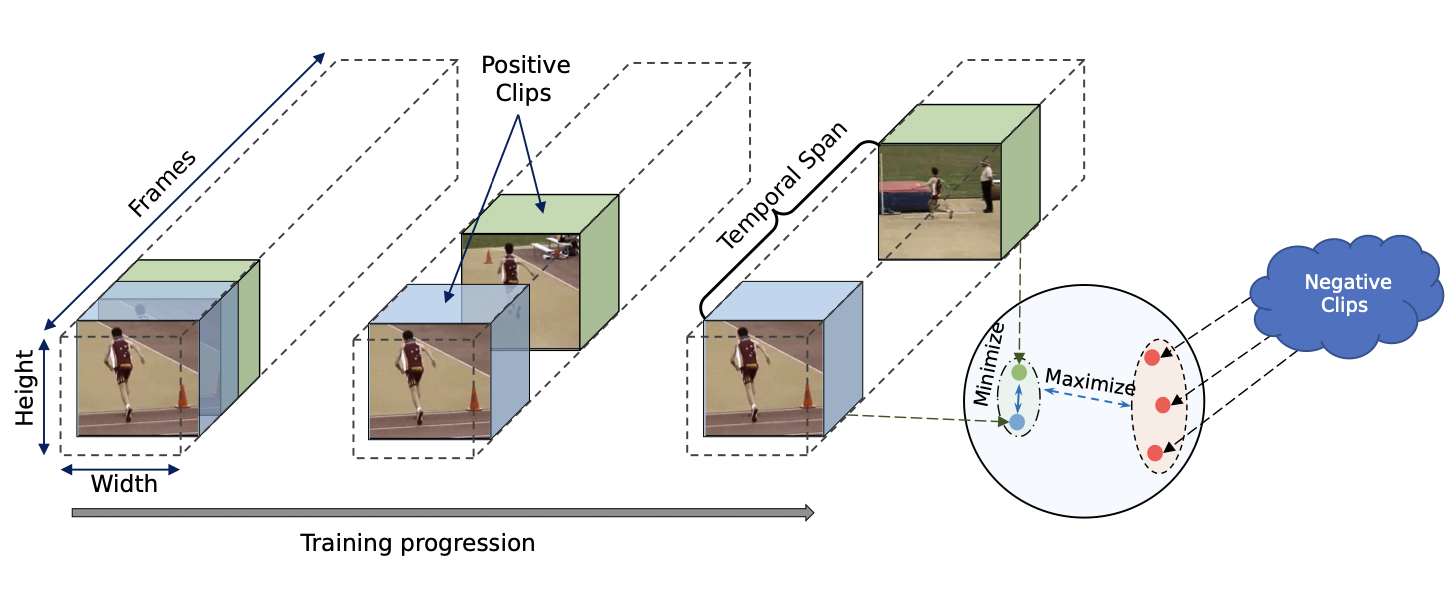

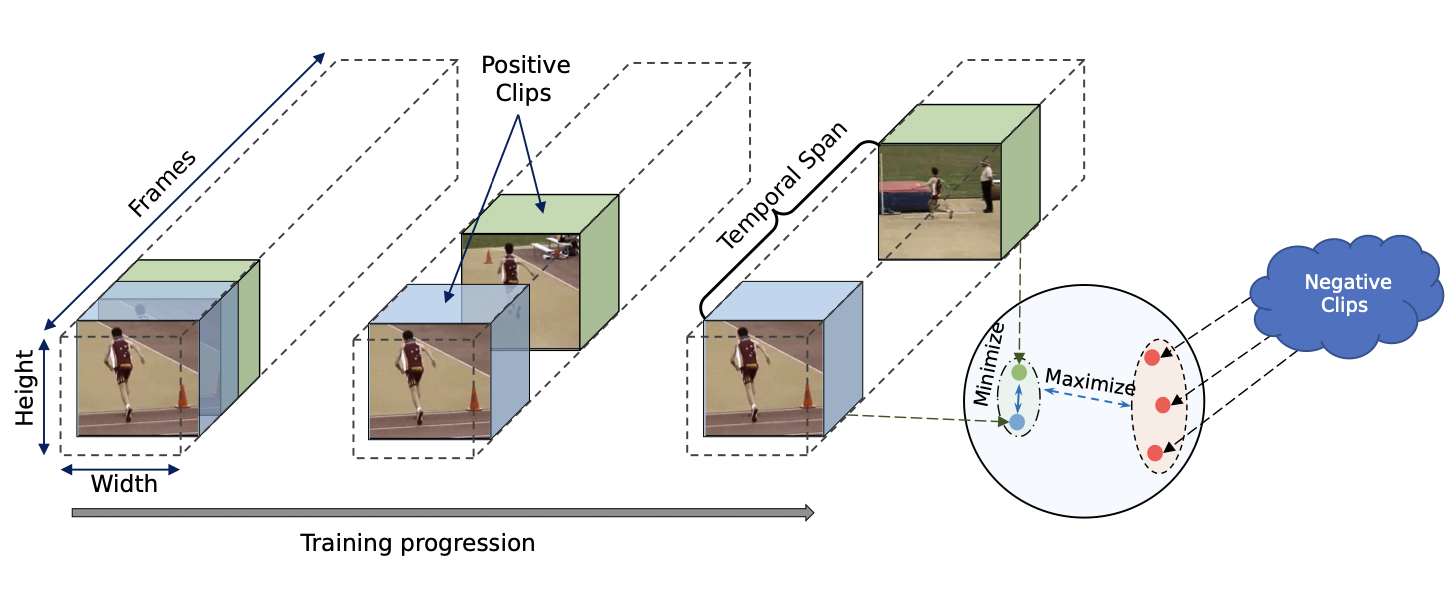

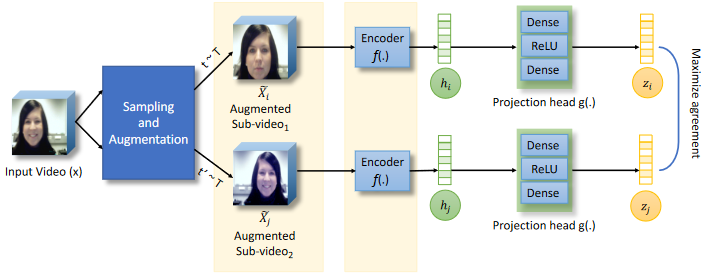

Temporal Contrastive Learning with Curriculum

Shuvendu Roy, Ali Etemad

IEEE International Conference on Acoustics, Speech and Signal Processing (ICASSP 2023)

Paper | ArXiv

Analysis of Semi-Supervised Methods for Facial Expression Recognition

Shuvendu Roy, Ali Etemad

IEEE International Conference on Affective Computing and Intelligent Interaction (ACII 2022)

Paper | Project Page | Code

Self-supervised Contrastive Learning of Multi-view Facial Expressions

Shuvendu Roy, Ali Etemad

ACM International Conference on Multimodal Interaction (ICMI 2021)

Paper | ArXiv

Spatiotemporal Contrastive Learning of Facial Expressions in Videos

Shuvendu Roy, Ali Etemad

IEEE International Conference on Affective Computing and Intelligent Interaction (ACII 2021)

Paper | ArXiv

Facial Emotion Recognition Using Transfer Learning in the Deep CNN

MAH Akhand, Shuvendu Roy, Nazmul Siddique, Md Abdus Samad Kamal, Tetsuya Shimamura

Electronics 10 (9), 2021

Paper | Undergrad Thesis | Code | Third Party Implementation

Academic Services

Reviewing

- Computer Vision and Pattern Recognition (CVPR), 2023, 2024, 2025

- International Conference on Machine Learning (ICML), 2025

- Conference on Neural Information Processing Systems (NeurIPS), 2025

- International Conference on Learning Representations (ICLR), 2025

- International Conference on Computer Vision (ICCV), 2025

- European Conference on Computer Vision (ECCV), 2022, 2024

- AAAI Conference on Artificial Intelligence, 2023, 2024, 2025

- IEEE Transactions on Pattern Analysis and Machine Intelligence (TPAMI)

- IEEE Transactions on Affective Computing (TAFFC)

- IEEE Transactions on Artificial Intelligence (TAI)

- Publons Profile

Awards

- First place in the Agentic Retrieval Grand Challenge (ACM-ICAIF ’25), Oct 2025

- First prize, IEEE Research Excellence Award (PhD), IEEE Kingston Section, 2024

- Graduate Student Conference Award, Queen’s University, Canada, 2023

- Vocational Scholarship from Khulna University of Engineering & Technology for Academic Excellence, 2015, 2018

- Second place in ‘System Development Project Competition’, Khulna University of Engineering & Technology, 2018